How do AI code review bots detect complex bugs that a human reviewer might miss consistently?

The technical mechanisms behind AI's advantage in finding subtle code issues

Alex Mercer

Feb 27, 2026

Human code reviewers are excellent at evaluating design decisions and understanding business logic. But they consistently miss certain bug categories that AI code review tools reliably catch.

Studies analyzing production codebases report that AI-generated code contains 1.7 times more issues than human-written code. Yet many of these issues slip through human review because catching them requires tracking data flow across dozens of function calls or examining rare combinations of conditions that humans don't naturally consider.

Instead of asking whether AI is smarter, the better question is what AI code review bots can evaluate systematically that humans might miss under time pressure.

The answer involves specific technical capabilities: interprocedural analysis, exhaustive path exploration, and pattern recognition trained on billions of lines of code.

TLDR

AI-powered code review tools detect complex bugs that humans miss through:

Interprocedural data-flow analysis that traces variables across 10-20 function calls to find hidden security vulnerabilities

Exhaustive path exploration examining thousands of execution paths humans can't mentally simulate

Pattern recognition trained on billions of code examples that identifies subtle vulnerability variants

Cross-file dependency tracking that catches integration bugs spanning multiple modules

Consistent attention that doesn't degrade after reviewing hundreds of lines

Automated PR review tools like cubic analyze entire repositories to catch these issues, while human reviewers typically examine only the changed code in isolation.

Why human reviewers miss complex bugs consistently

Human code review has predictable blind spots. Even experienced reviewers run into cognitive limits when working through complex systems. This is a limit of human attention and working memory, not a lack of skill.

1. Limited mental simulation

When reviewing code, humans mentally simulate execution. But this simulation is shallow. A reviewer might trace through 2-3 function calls. Beyond that, they lose track of the state.

Complex bugs often hide in chains of 10-15 function calls where data transforms subtly at each step. By call 7 or 8, the reviewer has forgotten details from call 2.

2. Inability to explore all paths

Code has many execution paths determined by conditionals, loops, and exception handling. Humans focus on the happy path and maybe one or two error cases.

Bugs that only appear when three specific conditions align (feature flag on, cache warm, specific user role) get missed. Humans don't systematically enumerate all possible combinations.
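To make the combinatorics concrete, here is a minimal Python sketch. The function `can_view_invoice`, its three conditions, and the intended rule are all hypothetical, invented for illustration. The point is only that a tool can check every combination, where a reviewer would typically try two or three.

```python
from itertools import product

ROLES = ("admin", "member", "guest")


def can_view_invoice(flag_on: bool, cache_warm: bool, role: str) -> bool:
    """Hypothetical access check with a bug on one rare path."""
    if flag_on and cache_warm:
        # Bug: the cached fast path skips the role check entirely.
        return True
    return role in ("admin", "member")


def expected(role: str) -> bool:
    """The intended rule: guests never see invoices."""
    return role != "guest"


def find_violations() -> list:
    """Check all 12 combinations of the three conditions against the rule."""
    violations = []
    for flag_on, cache_warm, role in product((False, True), (False, True), ROLES):
        if can_view_invoice(flag_on, cache_warm, role) != expected(role):
            violations.append((flag_on, cache_warm, role))
    return violations


violations = find_violations()
```

Only one of the twelve combinations breaks the rule (flag on, cache warm, guest role), which is exactly the kind of case a happy-path review skips.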

3. Review fatigue

Research on code review effectiveness shows that after 60 minutes of continuous review, defect detection rates drop significantly. Large pull requests receive progressively worse reviews as reviewers fatigue.

AI code assistants don't experience fatigue. They apply the same analytical rigor to line 500 as they did to line 1.

How AI detects bugs through interprocedural analysis

The most powerful capability AI brings to code review is interprocedural analysis, which tracks how data flows through entire programs.

What interprocedural analysis means

Interprocedural analysis traces variables across function boundaries. When a value enters a function, gets modified, is passed to another function, is transformed again, and is eventually used in a potentially dangerous way, AI tracks that entire journey.

Research on taint tracking shows this is essential for finding security vulnerabilities. User input might be sanitized in 9 out of 10 code paths, but may be missed on one rare path. Interprocedural analysis finds the missed path.

Taint tracking across function calls

Taint tracking marks data from untrusted sources (user input, network data, file contents) and follows it through the program. If tainted data reaches a security-sensitive sink (database query, command execution, file operations) without proper validation, the AI flags it.

Example scenario:

User input → API handler → middleware → business logic → helper function → database query

If the sanitization happens in middleware, but a specific code path bypasses middleware under certain conditions, the vulnerability exists. Human reviewers rarely trace this manually. AI does it systematically.
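The scenario above can be sketched as a search over a call graph. This is a simplified illustration, not how any particular tool implements taint tracking: the graph, the function names, and the `legacy_export` bypass are hypothetical, and real analyzers track individual values rather than whole functions.

```python
# Hypothetical call graph: each function maps to the functions it calls.
CALL_GRAPH = {
    "api_handler": ["middleware", "legacy_export"],  # second edge bypasses middleware
    "middleware": ["business_logic"],
    "legacy_export": ["business_logic"],
    "business_logic": ["helper"],
    "helper": ["db_query"],
    "db_query": [],
}
SANITIZERS = {"middleware"}  # functions assumed to clean tainted data
SINKS = {"db_query"}         # security-sensitive operations


def unsanitized_paths(source: str) -> list[list[str]]:
    """Return every call path from source to a sink that never passes a sanitizer."""
    found = []
    stack = [(source, [source])]
    while stack:
        node, path = stack.pop()
        if node in SANITIZERS:
            continue  # taint is cleared here, so stop following this path
        if node in SINKS:
            found.append(path)
            continue
        for callee in CALL_GRAPH[node]:
            if callee not in path:  # avoid looping on recursive calls
                stack.append((callee, path + [callee]))
    return found


paths = unsanitized_paths("api_handler")
```

Here the only path reported is the one through `legacy_export`, the route that skips the middleware.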

Cross-file dependency tracking

Bugs that span multiple files are particularly hard for humans to catch. A change in one module breaks an assumption made in another module that the reviewer never looks at.

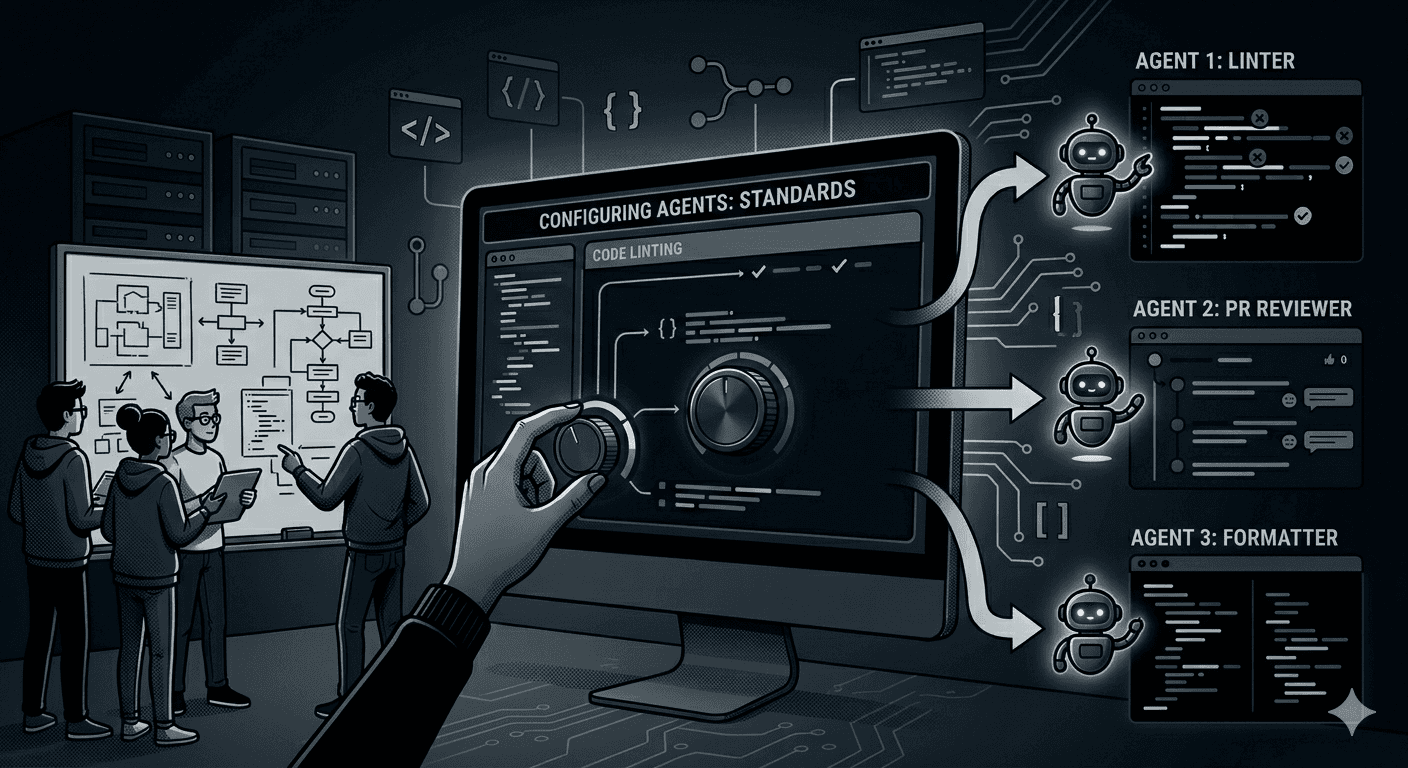

AI-powered code review tools analyze entire repositories, not just PR diffs. Tools like cubic's codebase scans continuously run thousands of agents across your full repository to find issues that don't show up when examining individual changes in isolation.
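As a rough sketch of why repository-wide context matters, the snippet below walks a module import graph backwards to find files that depend on a changed file but are not part of the diff. The graph and file names are hypothetical, and this is not a description of how cubic or any other tool works internally.

```python
from collections import deque

# Hypothetical import graph: module -> modules it imports.
IMPORTS = {
    "billing/invoice.py": ["core/money.py"],
    "reports/summary.py": ["billing/invoice.py"],
    "core/money.py": [],
    "ui/banner.py": [],
}


def affected_modules(changed: set[str]) -> set[str]:
    """Return modules outside the diff that depend, directly or transitively, on a changed module."""
    # Invert the graph so we can walk from a module to its dependents.
    dependents: dict[str, set[str]] = {}
    for module, deps in IMPORTS.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(module)

    seen = set(changed)
    queue = deque(changed)
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, ()):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return seen - changed
```

A change to `core/money.py` touches one file in the diff, but `affected_modules({"core/money.py"})` also surfaces the invoice and report modules that rely on its behavior.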

AI explores more execution paths than humans

The number of execution paths grows rapidly with every added conditional, and automated tools can enumerate far more of them than a human can hold in mind during review.

Symbolic execution techniques

Automated code review tools use symbolic execution to explore what happens under different input combinations. Instead of running code with specific values, they use symbolic values representing ranges of possibilities.

This reveals bugs that only trigger under specific conditions. Race conditions that need precise timing. Integer overflows that only happen with particular input ranges. Null pointer exceptions that occur when optional parameters are omitted.

Research shows that bugs requiring 3+ conditions to align are rarely caught by human review but get flagged reliably by tools using symbolic execution.
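The idea can be illustrated with a toy example. Real symbolic execution engines hand each path's constraints to a constraint solver. This sketch swaps that for a brute-force search over a small input range, so treat it as an illustration of the concept only. The `unit_price` function and its paths are hypothetical.

```python
from itertools import product

# The function under analysis (hypothetical). It divides on both paths.
#
#   def unit_price(total, qty, discount):
#       if qty > 100 and discount > 0:
#           return total / (qty * (100 - discount))
#       return total / qty

# Each path is described by the branch constraint that selects it and the
# condition under which the division on that path would hit zero.
PATHS = {
    "bulk_discount": (
        lambda qty, discount: qty > 100 and discount > 0,
        lambda qty, discount: qty * (100 - discount) == 0,
    ),
    "default": (
        lambda qty, discount: not (qty > 100 and discount > 0),
        lambda qty, discount: qty == 0,
    ),
}


def find_witnesses(max_qty: int = 200, max_discount: int = 100) -> dict:
    """For each path, search for one input that reaches it and triggers the failure."""
    witnesses = {}
    for name, (reaches_path, divides_by_zero) in PATHS.items():
        for qty, discount in product(range(max_qty + 1), range(max_discount + 1)):
            if reaches_path(qty, discount) and divides_by_zero(qty, discount):
                witnesses[name] = (qty, discount)
                break
    return witnesses


witnesses = find_witnesses()
```

The search returns a concrete failing input for each path: a quantity above 100 with a 100% discount on the first, and a zero quantity on the second. A solver-backed engine reaches the same kind of answer without enumerating inputs.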

Statistical path analysis

Some automated PR review approaches use statistical methods trained on millions of code examples. They recognize patterns associated with bugs even when the exact mechanism isn't obvious.

Code that "looks similar" to code that caused problems in other projects gets flagged. This catches bugs that don't fit known vulnerability patterns but still represent elevated risk.

Pattern recognition at massive scale

Modern AI-based code review tools are trained on billions of lines of open-source code plus documented vulnerabilities from CVE databases.

Learning from known vulnerabilities

Security-focused online code review tools have been trained on thousands of real-world exploits. They recognize subtle variations of vulnerability patterns that humans wouldn't identify without extensive security experience.

For example, prototype pollution in JavaScript has dozens of variant forms. AI trained on the full spectrum of these variants catches new instances that don't exactly match any single pattern.

Recognizing anti-patterns

Beyond security vulnerabilities, AI detects code patterns that correlate with bugs. High complexity combined with low test coverage. Frequent modifications to the same files. Error handling that differs from similar code elsewhere in the project.

These aren't deterministic bugs but risk indicators. Human reviewers struggle to maintain awareness of codebase-wide patterns. AI makes these connections automatically.
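One way to picture a risk indicator is as a score that combines these signals. The formula and field names below are assumptions made for illustration, not a metric taken from any specific tool.

```python
from dataclasses import dataclass


@dataclass
class FileStats:
    path: str
    complexity: int      # e.g. cyclomatic complexity of the file
    coverage: float      # fraction of lines covered by tests, 0.0 to 1.0
    recent_changes: int  # commits touching the file in some recent window


def risk_score(stats: FileStats) -> float:
    """Higher when code is complex, poorly tested, and frequently modified."""
    return stats.complexity * (1.0 - stats.coverage) * (1 + stats.recent_changes)


def rank_by_risk(files: list[FileStats]) -> list[FileStats]:
    """Sort files so the riskiest come first."""
    return sorted(files, key=risk_score, reverse=True)


files = [
    FileStats("util/format.py", complexity=5, coverage=0.2, recent_changes=1),
    FileStats("billing/charge.py", complexity=30, coverage=0.2, recent_changes=9),
    FileStats("auth/session.py", complexity=30, coverage=0.9, recent_changes=9),
]
ranked = [f.path for f in rank_by_risk(files)]
```

The two complex, frequently changed files differ only in test coverage, and that alone moves `billing/charge.py` to the top of the list.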

Where AI delivers more consistency than human review

Online code review platforms maintain consistent review quality that humans can't match over extended periods.

No attention degradation

Human focus degrades after examining hundreds of lines of code. Small details get overlooked. Pattern recognition weakens.

Codacy alternatives and similar automated tools review 1,000 lines with the same thoroughness they apply to 10 lines. The 500th line gets analyzed as carefully as the first.

Always checking everything

Humans make judgment calls about what to review carefully. They might skim test files, assuming tests are less risky. They might skip reviewing generated code.

AI doesn't skip anything unless explicitly configured to. Every line gets analyzed. This catches bugs in unexpected places.

Memory of past issues

When similar code caused bugs before, AI remembers and flags similar patterns. Human reviewers might not remember a bug from six months ago or might not recognize the similarity.

This organizational memory compounds over time. The longer a team uses AI code review, the more historical context informs current reviews.

Where AI code review still falls short

AI code review is powerful but not perfect. Understanding limitations helps set appropriate expectations.

Understanding business logic

AI struggles with bugs that violate business rules rather than programming rules. If an e-commerce system should require manager approval for orders above $10,000, AI might not catch code that lets a $10,000.01 order skip approval, because nothing about that code looks wrong unless you know the rule.

Human reviewers better understand whether code matches business requirements.
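A small sketch shows why this is hard to detect automatically. Both functions below are hypothetical and equally valid Python. Only the requirement (orders above $10,000 need approval) tells you which one is wrong.

```python
from decimal import Decimal

# The business rule comes from the requirements, not from the code itself.
APPROVAL_THRESHOLD = Decimal("10000")


def needs_manager_approval(order_total: Decimal) -> bool:
    """Orders strictly above the threshold need a manager's sign-off."""
    return order_total > APPROVAL_THRESHOLD


def needs_manager_approval_buggy(order_total: Decimal) -> bool:
    """Same shape, wrong constant: well-formed code that violates the rule."""
    return order_total > Decimal("100000")
```

A reviewer who knows the rule spots the extra zero immediately. A tool without access to the requirement sees two comparisons that are both perfectly reasonable.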

Novel vulnerability classes

AI is trained on known bug patterns. Entirely new categories of vulnerabilities that don't resemble anything in the training data might get missed.

As new frameworks and languages emerge, AI needs retraining to understand their specific vulnerability patterns.

False positives from deep analysis

Interprocedural analysis sometimes follows paths that can't actually execute in practice. The AI then flags a vulnerability that exists on the analyzed path but can't be triggered, because of constraints the analysis didn't model.

This is why valuable AI code review insights require not just finding potential issues but prioritizing them by actual risk.

How modern AI code review tools combine techniques

The most effective AI code review platforms don't rely on a single technique. They combine multiple approaches.

Multi-layer analysis

Start with fast static analysis to catch obvious issues. Apply deeper interprocedural analysis to security-critical code paths. Use ML models to recognize subtle patterns. Employ symbolic execution for complex conditional logic.

Each layer catches different bug categories. The combination provides comprehensive coverage.
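The layering can be sketched as a small pipeline. This example shows only two of the layers, and both checks are deliberately naive string matches standing in for real analyzers. The function names and the `security_critical` set are assumptions for illustration.

```python
from typing import NamedTuple


class Finding(NamedTuple):
    layer: str
    path: str
    message: str


def fast_static(path: str, source: str) -> list[Finding]:
    """Cheap textual check, standing in for a fast static-analysis pass."""
    if "eval(" in source:
        return [Finding("static", path, "use of eval")]
    return []


def deep_dataflow(path: str, source: str) -> list[Finding]:
    """Placeholder for an expensive interprocedural pass."""
    if "request." in source and "execute(" in source:
        return [Finding("dataflow", path, "request data may reach a query")]
    return []


def review(files: dict[str, str], security_critical: set[str]) -> list[Finding]:
    """Run the cheap layer everywhere and the expensive layer only where it matters."""
    findings: list[Finding] = []
    for path, source in files.items():
        findings += fast_static(path, source)
        if path in security_critical:
            findings += deep_dataflow(path, source)
    return findings


files = {
    "auth/login.py": "cursor.execute(request.args['q'])",
    "util/calc.py": "eval(expr)",
}
findings = review(files, security_critical={"auth/login.py"})
```

The design choice is about cost: the fast layer runs on every file, while the deeper pass is reserved for code marked as security-critical.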

Continuous learning

Tools that learn from team feedback improve over time. When developers mark findings as false positives, the system adjusts. When they confirm real bugs, it recognizes similar patterns.

This feedback loop makes AI code review increasingly aligned with what actually matters to your team.
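A minimal version of such a feedback loop might look like the following. The class, its thresholds, and the rule names are hypothetical. Production systems likely use richer signals than a per-rule precision count.

```python
from collections import Counter


class RuleFeedback:
    """Tracks, per rule, how often developers confirmed or dismissed its findings."""

    def __init__(self, min_votes: int = 5, min_precision: float = 0.3):
        self.confirmed = Counter()
        self.dismissed = Counter()
        self.min_votes = min_votes
        self.min_precision = min_precision

    def record(self, rule: str, is_real_bug: bool) -> None:
        """Store one developer verdict on a finding from the given rule."""
        (self.confirmed if is_real_bug else self.dismissed)[rule] += 1

    def should_report(self, rule: str) -> bool:
        """Keep reporting a rule unless enough feedback shows it is mostly noise."""
        votes = self.confirmed[rule] + self.dismissed[rule]
        if votes < self.min_votes:
            return True  # not enough feedback yet, keep reporting
        return self.confirmed[rule] / votes >= self.min_precision


feedback = RuleFeedback()
for _ in range(5):
    feedback.record("style-nit", is_real_bug=False)
    feedback.record("sql-injection", is_real_bug=True)
```

After five dismissals the `style-nit` rule goes quiet, while `sql-injection` and any rule without feedback keep reporting.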

Integration with development workflow

Finding bugs is only valuable if developers fix them. The best automated PR review tools integrate directly into pull requests, providing actionable feedback where developers already work.

One-click fixes for simple issues reduce friction. Clear explanations help developers understand and fix complex problems.

Making AI code review work for your team

AI code review bots detect complex bugs through technical capabilities humans can't match: interprocedural analysis across dozens of function calls, exhaustive exploration of thousands of execution paths, and pattern recognition trained on billions of code examples.

These capabilities are most valuable when integrated into team workflows. AI handles mechanical analysis. Humans focus on design and business logic. Together, they catch significantly more bugs than either approach alone.

The key is choosing tools that provide actionable insights, learn from your feedback, and integrate seamlessly into existing processes.

Ready to catch bugs human reviewers consistently miss?

Try cubic and experience AI code review that analyzes your entire repository to find complex issues.