
cubic is the #1 AI code reviewer on Code Review Bench

61.8% F1 on Martian's independent code review benchmark

Paul Sangle-Ferriere

Mar 25, 2026

The Benchmark

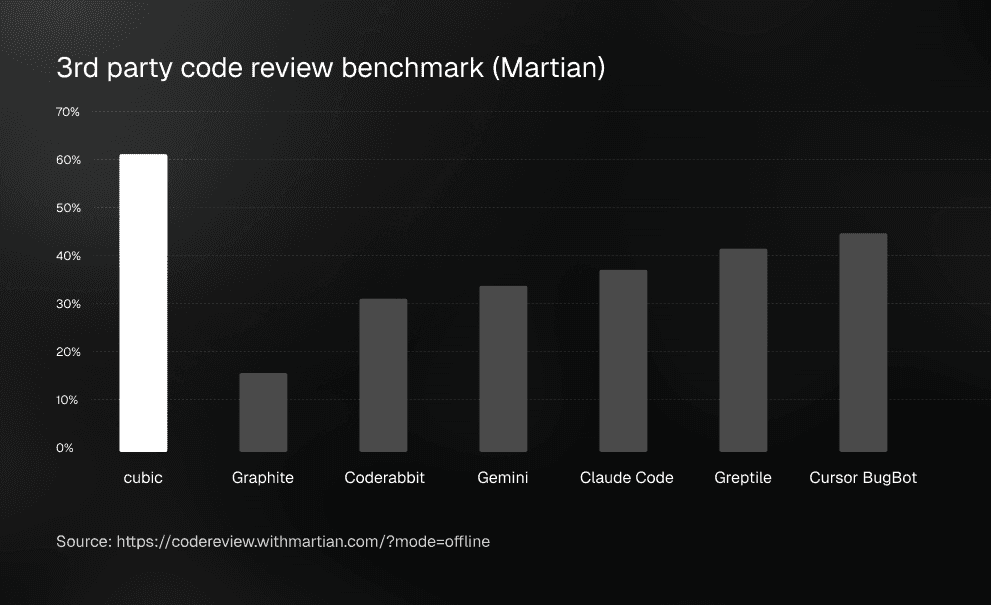

Martian's Code Review Benchmark is currently the most comprehensive third-party evaluation for AI code review agents.

It tests tools on real-world pull requests and measures how well they catch actual bugs (Recall) without overwhelming developers with false positives (Precision).

The Leaderboard

| Rank | Agent | F1 Score | Precision | Recall |
|---|---|---|---|---|
| #1 | cubic | 61.8% | 56.3% | 68.6% |
| #6 | Cursor Bugbot | 45.5% | 47.2% | 43.8% |
| #13 | Claude Code Reviewer | 37.6% | 34.8% | 40.9% |
| #16 | Gemini | 33.9% | 31.1% | 37.2% |
| #17 | CodeRabbit | 30.3% | 24.7% | 39.4% |

Source: codereview.withmartian.com

cubic's F1 score is 16.3 percentage points above Cursor Bugbot (45.5%), the next well-known tool on the list.

The metric matters: Why F1?

In AI code review, optimizing for just one metric creates a terrible developer experience.

If you only optimize for Recall (catching every possible bug), the agent will flag every minor nitpick and hallucinate issues. Developers get buried in noise and start ignoring the bot entirely.

If you only optimize for Precision (only commenting when 100% certain), the agent becomes too timid and misses the complex, architectural bugs that actually break production.

The F1 score is the harmonic mean of Precision and Recall, so a weak result on either one drags the whole score down. To reach a 61.8% F1, an agent has to consistently find real bugs while maintaining a high signal-to-noise ratio.
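As a quick check on the arithmetic, the sketch below recomputes F1 from the Precision and Recall values in cubic's leaderboard row. The `f1_score` helper and the "noisy agent" numbers are illustrative assumptions, not part of the benchmark's own scoring code, and recomputing from rounded table values can differ from a published F1 by about a tenth of a point.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (both in the same units, e.g. percent)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Precision and recall for cubic, taken from the leaderboard.
cubic_f1 = f1_score(56.3, 68.6)
print(round(cubic_f1, 1))  # 61.8

# Hypothetical agent that flags almost everything: 95% recall, 10% precision.
# The harmonic mean lands far below the simple average of 52.5.
noisy_f1 = f1_score(10.0, 95.0)
print(round(noisy_f1, 1))  # 18.1
```

The second case shows why a recall-only strategy does not pay off on this metric: the low precision dominates the result.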

How we achieved this

Most AI code review tools run a single LLM pass over the git diff. That approach hits a ceiling quickly. To break past the 40% F1 barrier, we had to change how the agent interacts with the code.

1. Continuous A/B testing and model routing

We constantly run experiments to understand which models perform best for specific use cases. Tools like Claude Code are locked into using one specific model. We aren't. We route different parts of the review process to the best model for that specific situation, optimizing the pipeline far beyond what a single model can achieve.
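The general shape of this kind of routing can be illustrated with a small, hypothetical sketch: record experiment scores per review stage and per model, then send each stage to whichever model has the best average. The class name, stage names, model names, and scores below are all invented for illustration and are not cubic's actual pipeline.

```python
from collections import defaultdict


class ModelRouter:
    """Routes each review stage to the model with the best recorded mean score."""

    def __init__(self) -> None:
        # stage -> model -> list of experiment scores (e.g. F1 on an eval set)
        self._scores = defaultdict(lambda: defaultdict(list))

    def record(self, stage: str, model: str, score: float) -> None:
        """Store one experiment result for a (stage, model) pair."""
        self._scores[stage][model].append(score)

    def best_model(self, stage: str) -> str:
        """Return the model with the highest mean score for this stage."""
        candidates = self._scores.get(stage)
        if not candidates:
            raise KeyError(f"no experiment results for stage {stage!r}")
        return max(candidates, key=lambda m: sum(candidates[m]) / len(candidates[m]))


# Hypothetical experiment results for two stages and two models.
router = ModelRouter()
router.record("security", "model-a", 0.52)
router.record("security", "model-b", 0.61)
router.record("style", "model-a", 0.70)
router.record("style", "model-b", 0.58)

print(router.best_model("security"))  # model-b
print(router.best_model("style"))     # model-a
```

A production router would likely also weigh cost, latency, and statistical significance of the experiments, which this sketch leaves out.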

2. Full codebase context, not just diffs

A diff doesn't tell you if a new function breaks an architectural pattern established three folders away. cubic analyzes the full codebase context to understand cross-file dependencies and systemic rules, not just local syntax.
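One simple form of cross-file context is knowing which other files depend on the module a pull request touches. The sketch below is a hypothetical example of that single idea for Python code, using the standard-library `ast` module to find files that import a changed module. The `find_importers` function and the in-memory `repo` mapping are assumptions made for illustration; the post does not describe how cubic builds its context.

```python
import ast


def find_importers(sources: dict[str, str], changed_module: str) -> list[str]:
    """Return paths whose source imports `changed_module` or one of its submodules."""
    importers = []
    for path, source in sources.items():
        for node in ast.walk(ast.parse(source)):
            if isinstance(node, ast.Import):
                names = [alias.name for alias in node.names]
            elif isinstance(node, ast.ImportFrom) and node.module:
                # Cover both `from pkg.mod import X` and `from pkg import mod`.
                names = [node.module] + [f"{node.module}.{a.name}" for a in node.names]
            else:
                continue  # not an import, or a relative import we don't resolve here
            if any(n == changed_module or n.startswith(changed_module + ".") for n in names):
                importers.append(path)
                break
    return sorted(importers)


# Hypothetical repository contents, keyed by file path.
repo = {
    "billing/invoice.py": "from core.money import Money\n",
    "api/routes.py": "import core.money\nimport json\n",
    "utils/strings.py": "import re\n",
}
print(find_importers(repo, "core.money"))
# ['api/routes.py', 'billing/invoice.py']
```

A reviewer that only sees the diff for `core/money.py` would miss both dependents; even this basic import graph surfaces them as files worth reading.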

3. Adaptive learning

Out of the box, AI doesn't know your team's unwritten rules. We built cubic to learn from developer behavior. When reviewers accept or dismiss suggestions, the system adapts its internal rules engine. Over time, it stops making generic suggestions and starts reviewing like your senior engineers.
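A minimal way to picture this feedback loop is a per-rule acceptance rate that gates future comments. The sketch below is hypothetical: the `FeedbackFilter` class, the rule IDs, and the thresholds are assumptions for illustration, not a description of cubic's rules engine.

```python
from dataclasses import dataclass


@dataclass
class RuleStats:
    accepted: int = 0
    dismissed: int = 0

    @property
    def total(self) -> int:
        return self.accepted + self.dismissed

    @property
    def acceptance_rate(self) -> float:
        return self.accepted / self.total if self.total else 0.0


class FeedbackFilter:
    """Suppresses rules that reviewers keep dismissing."""

    def __init__(self, min_samples: int = 5, min_acceptance: float = 0.3) -> None:
        self.min_samples = min_samples        # feedback needed before suppressing
        self.min_acceptance = min_acceptance  # acceptance rate required to keep a rule
        self.stats = {}                       # rule_id -> RuleStats

    def record(self, rule_id: str, accepted: bool) -> None:
        stats = self.stats.setdefault(rule_id, RuleStats())
        if accepted:
            stats.accepted += 1
        else:
            stats.dismissed += 1

    def should_comment(self, rule_id: str) -> bool:
        stats = self.stats.get(rule_id)
        if stats is None or stats.total < self.min_samples:
            return True  # not enough feedback yet, keep commenting
        return stats.acceptance_rate >= self.min_acceptance


# Hypothetical feedback: one rule is always dismissed, another is mostly accepted.
feedback = FeedbackFilter()
for _ in range(5):
    feedback.record("prefer-early-return", accepted=False)
for accepted in (True, True, True, False, True):
    feedback.record("missing-null-check", accepted)

print(feedback.should_comment("prefer-early-return"))  # False
print(feedback.should_comment("missing-null-check"))   # True
print(feedback.should_comment("never-seen-rule"))      # True
```

The `min_samples` guard keeps a single dismissal from silencing a rule; only a consistent pattern of rejections changes behavior.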

Rerunning the results is easy

The benchmark is entirely public and independent. You can view the full methodology, task-level results, and leaderboard at codereview.withmartian.com.

Thousands of teams at companies like n8n, Resend, and Granola already use cubic in production. If you want to see how it performs on your own repositories, you can install it in about a minute.

Try the best AI code reviewer yourself