Coding analytics | Launch Week 03, Day 5

You can now measure AI coding output across every tool your team uses. Line-level attribution shows what's AI-written, what's human-edited, and whether AI-heavy PRs ship with more or fewer issues.

Victor Mier

Apr 17, 2026

AI coding agents may already write a large share of your team's code, but exactly how much of it is AI-written? Which tools and models is your team using? And how good is the generated code?

We are releasing AI Coding Analytics in beta to help you answer these questions.

What you’ll get:

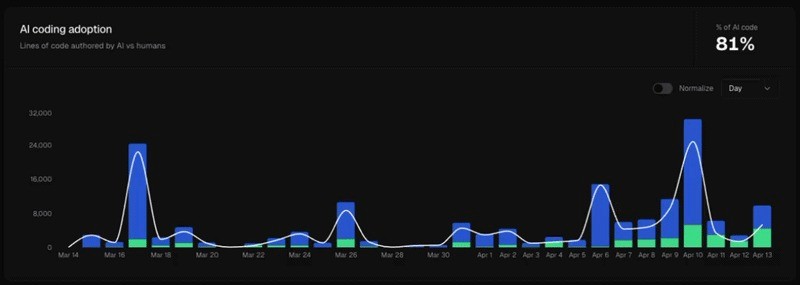

AI coding adoption

Lines of code broken down by author: AI, human, or mixed (written by AI, then edited by a human).
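As a rough illustration of the adoption metric, here is a minimal sketch that turns per-line labels into percentage shares. The label names (`ai`, `human`, `mixed`) and the function `attribution_shares` are hypothetical and chosen for this example only; the post does not describe how cubic computes the figure internally.

```python
from collections import Counter
from typing import Iterable

# Hypothetical labels for this sketch; the real product may classify lines differently.
LABELS = ("ai", "human", "mixed")


def attribution_shares(line_labels: Iterable[str]) -> dict[str, float]:
    """Return the percentage of lines per label, rounded to one decimal place."""
    counts = Counter(line_labels)
    unknown = set(counts) - set(LABELS)
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        # No lines at all: report zero for every label instead of dividing by zero.
        return {label: 0.0 for label in LABELS}
    return {label: round(100 * counts[label] / total, 1) for label in LABELS}


# Ten lines: six AI-written, three mixed, one human-written.
shares = attribution_shares(["ai"] * 6 + ["mixed"] * 3 + ["human"])
print(shares)  # {'ai': 60.0, 'human': 10.0, 'mixed': 30.0}
```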

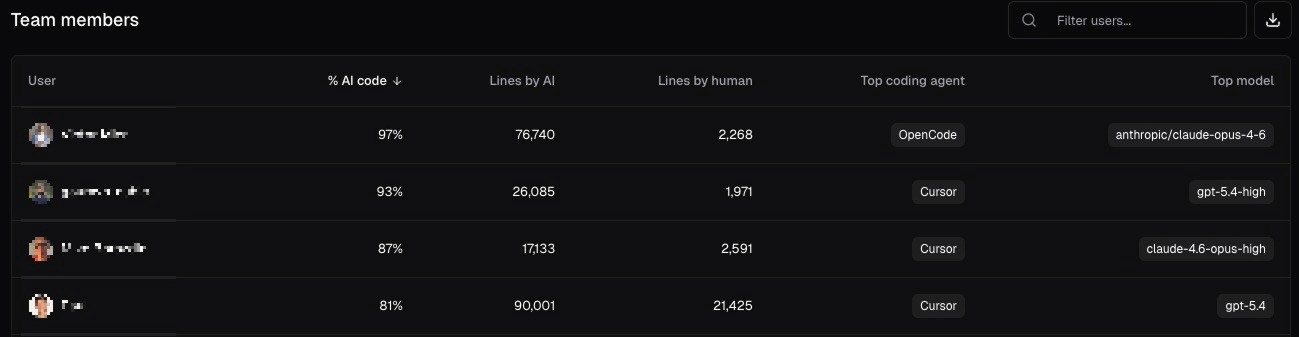

Team members

Get the usage breakdown for every developer on your team: percentage of AI-written code, preferred tool, and preferred model.

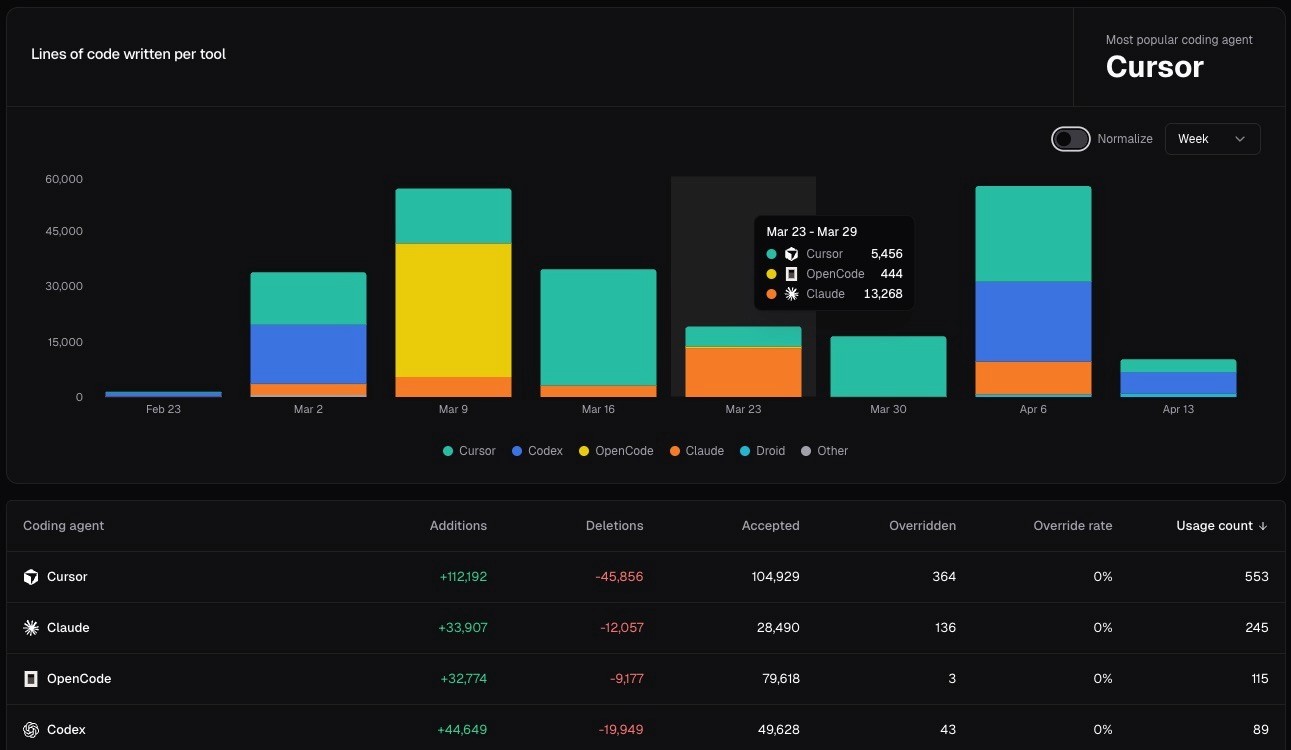

AI coding agents

Which tools and models your team uses, and how that usage changes over time.

Quality and review burden by AI contribution

The number of bugs introduced by AI-written code versus human-written code, broken down by tool and model.

Check all the details in the docs. As with all of our analytics, all data can be filtered by repository and time period.

How does it work?

We partnered with git-ai, an open-source utility for tracking AI-written code, which ships alongside the cubic CLI.

It installs hooks for the most popular coding agents. These hooks record attribution data for every line that gets written and save it in git notes.

Those git notes are pushed to GitHub alongside your commits. We parse and store the data on merge, so we only track data for code that gets into production.
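To see which commits in a local clone carry notes, a minimal sketch like the following can help. It relies only on standard git commands (`git notes list` and `git notes show`). The notes ref name `refs/notes/example-attribution` is a placeholder assumption, not a documented git-ai value, and the helper names are hypothetical. The post does not describe the note format, so the sketch returns the raw note text without parsing it.

```python
import subprocess

# Assumption: placeholder ref name. Check your repository for the ref actually in use.
NOTES_REF = "refs/notes/example-attribution"


def parse_notes_list(output: str) -> dict[str, str]:
    """Map each annotated commit SHA to the SHA of its note object.

    `git notes list` prints one "<note object> <annotated object>" pair per line.
    """
    mapping: dict[str, str] = {}
    for line in output.splitlines():
        parts = line.split()
        if len(parts) == 2:
            note_sha, commit_sha = parts
            mapping[commit_sha] = note_sha
    return mapping


def list_notes(repo_path: str, ref: str = NOTES_REF) -> dict[str, str]:
    """Run `git notes --ref <ref> list` in repo_path and parse the result."""
    result = subprocess.run(
        ["git", "-C", repo_path, "notes", "--ref", ref, "list"],
        capture_output=True, text=True, check=True,
    )
    return parse_notes_list(result.stdout)


def show_note(repo_path: str, commit_sha: str, ref: str = NOTES_REF) -> str:
    """Return the raw note text attached to one commit under the given ref."""
    result = subprocess.run(
        ["git", "-C", repo_path, "notes", "--ref", ref, "show", commit_sha],
        capture_output=True, text=True, check=True,
    )
    return result.stdout
```

Calling `list_notes(".")` inside a clone returns a mapping from commit SHA to note object SHA, and `show_note(".", sha)` prints whatever was stored for that commit.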

Installation

Just install the cubic CLI, and it will automatically start tracking AI code attribution for the most popular tools:

curl -fsSL https://cubic.dev/install | bash

If you already have cubic installed, make sure you’re on the latest version by upgrading:

cubic upgrade

To confirm that tracking is on, run cubic stats status.

Available today on Team and Pro plans.

https://docs.cubic.dev/analytics/ai-coding